Dynamically Evolving Cognitive Architecture

The structure of the Voice First world is held together by Intelligent Agents. Intelligent Agents use AI (Artificial Intelligence) and ML (Machine Learning) to decode volition and intent from an analyzed phrase or sentence. The AI in most current-generation systems, such as Siri, Echo and Cortana, focuses on speaker-independent word recognition. To some extent, it also recognizes the intent of predefined words or phrases that have a hard-coded connection to a domain of expertise.
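The sketch below is a hypothetical illustration of the hard-coded pattern, not code from Siri, Echo or Cortana. Each trigger phrase is wired by hand to a single domain handler. The phrases and handler names are invented for the example. An utterance that does not start with a predefined phrase simply fails.

```python
# Hypothetical illustration of hard-coded intent routing: every phrase is
# wired by hand to exactly one domain handler, and nothing is shared.
from typing import Callable, Dict, Optional


def weather_handler(city: str) -> str:
    # Hypothetical handler for a "weather" silo.
    return f"Weather lookup for {city}"


def music_handler(artist: str) -> str:
    # Hypothetical handler for a "music" silo.
    return f"Playing music by {artist}"


# Each predefined trigger phrase maps to exactly one handler (one silo).
HARD_CODED_INTENTS: Dict[str, Callable[[str], str]] = {
    "what's the weather in": weather_handler,
    "play music by": music_handler,
}


def route(utterance: str) -> Optional[str]:
    """Return the handler's response, or None if no predefined phrase matches."""
    text = utterance.lower().strip()
    for phrase, handler in HARD_CODED_INTENTS.items():
        if text.startswith(phrase):
            # Everything after the trigger phrase is passed through as the argument.
            return handler(text[len(phrase):].strip())
    return None  # unknown phrasing: a developer must add a new entry by hand
```

Every new skill, or every new way of phrasing an existing one, means another hand-written entry. This is the scaling problem described below.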

Viv uses a patented [1] exponential self-learning system, as opposed to the linearly programmed systems currently used by Siri, Echo and Cortana. The author's claim is that Viv’s technology is orders of magnitude more powerful for one reason. Viv’s operational software requires just a few lines of seed code to establish the domain [2], ontology [3] and taxonomy [4] it needs to operate on a word or phrase.

In the old paradigm, each task or skill in Siri, Echo and Cortana needed to be hard-coded by the developer and siloed into itself. Each one had little connection to the rest of the custom-programmed lexicon of domains. This limits how fast and how large these systems can scale. Each silo can ultimately connect to others through related ontologies and taxonomies, but doing so is highly inefficient. At some point, maintaining and updating the lexicon of words and phrases becomes a very large task. Viv solves this rather large problem with an approach that is simple for both the system and the developer.
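The general idea behind dynamic program generation can be sketched as follows, under stated assumptions. This is a minimal illustration, not Viv's implementation. Developers declare capabilities only by the concept types they consume and produce in a shared ontology. A planner then chains those capabilities at request time instead of relying on hard-coded phrase routes. The capability names, concept types and the breadth-first search planner are all hypothetical stand-ins chosen for clarity.

```python
# Hypothetical sketch: capabilities declare input/output concepts in a shared
# ontology, and a planner chains them at request time (breadth-first search).
from collections import deque
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Capability:
    name: str            # hypothetical capability name
    input_concept: str   # concept type the capability consumes
    output_concept: str  # concept type the capability produces


# Hypothetical capabilities, as if contributed by different third-party developers.
CAPABILITIES = [
    Capability("geocode_city", "CityName", "GeoLocation"),
    Capability("forecast_at", "GeoLocation", "WeatherForecast"),
    Capability("find_restaurant", "GeoLocation", "Restaurant"),
    Capability("reserve_table", "Restaurant", "Reservation"),
]


def plan(start: str, goal: str, caps: List[Capability]) -> Optional[List[str]]:
    """Find the shortest chain of capabilities that turns a value of concept
    `start` into a value of concept `goal`, or return None if none exists."""
    # Index capabilities by the concept they consume.
    by_input: Dict[str, List[Capability]] = {}
    for cap in caps:
        by_input.setdefault(cap.input_concept, []).append(cap)

    queue: deque[Tuple[str, List[str]]] = deque([(start, [])])
    visited = {start}
    while queue:
        concept, chain = queue.popleft()
        if concept == goal:
            return chain
        for cap in by_input.get(concept, []):
            if cap.output_concept not in visited:
                visited.add(cap.output_concept)
                queue.append((cap.output_concept, chain + [cap.name]))
    return None
```

No developer wrote a dedicated "city name to reservation" skill. `plan("CityName", "Reservation", CAPABILITIES)` assembles one from three independent declarations. Appending a single new declaration, such as a hypothetical `Capability("book_cab", "Restaurant", "CabBooking")`, makes further chains possible without editing any existing entry.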

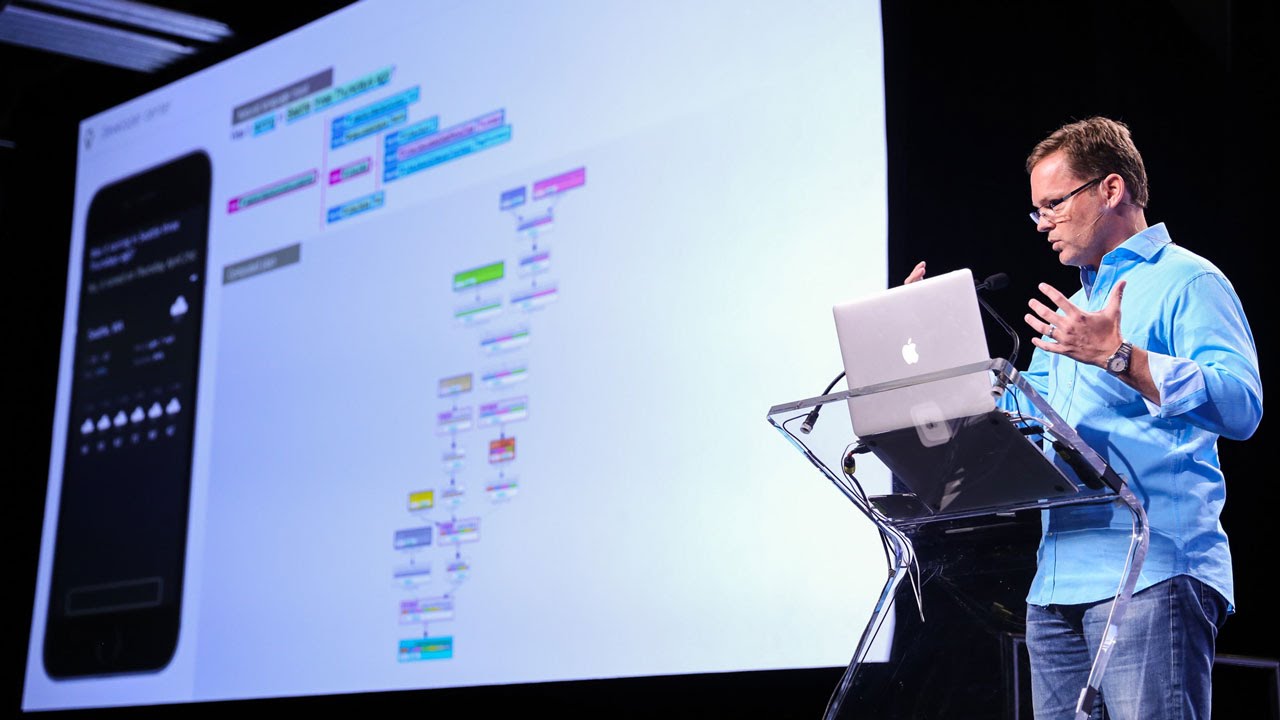

Specimen of the developer console identifying domain intent programming.

Viv’s team calls this new paradigm the “Dynamically evolving cognitive architecture system”. There is limited public information on the system, and I cannot address any private information I may have access to. However, the patent “Dynamically evolving cognitive architecture system based on third-party developers” [5] was published on December 24th, 2014, and it offers incredible insight into the future.

Source: https://www.quora.com/How-does-dynamic-program-generation-work

Patent: